Koh Takeuchi

Projects “presto - sakigake”

Frechet Kernel for Trajectory Data Analysis (SIGSPATIAL2021)

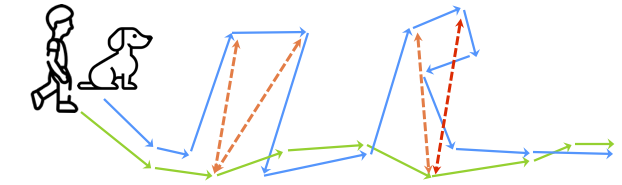

移動体の軌跡データ解析において移動系列の類似度を測るために空間データ解析分野で広く使用されている、フレシェ距離やハウスドルフ距離を滑らかにした軌跡カーネルとその高速演算アルゴリズムを提案。自動車・自転車・歩行者・野生動物・スポーツ選手などから得られたデータを用いた(3-C) 実軌跡データ解析への応用を行い、実験結果から軌跡カーネルの優位性を示した。本研究は、空間データ解析分野の国際会議 ACM SIGSPATIAL 2021(The ACM SIGSPATIAL International Conference on Advances in Geographic Information Systems 2021)に採択されました。

[Proceedings: https://dl.acm.org/doi/10.1145/3474717.3483949]

[code: https://github.com/koh-t/frk]

@inproceedings{10.1145/3474717.3483949,

author = {Takeuchi, Koh and Imaizumi, Masaaki and Kanda, Shunsuke and Tabei, Yasuo and Fujii, Keisuke and Yoda, Ken and Ishihata, Masakazu and Maekawa, Takuya},

title = {Fr\'{e}chet Kernel for Trajectory Data Analysis},

year = {2021},

isbn = {9781450386647},

url = {https://doi.org/10.1145/3474717.3483949},

doi = {10.1145/3474717.3483949},

booktitle = {Proceedings of the 29th International Conference on Advances in Geographic Information Systems},

series = {SIGSPATIAL ’21}

}

概要:

Trajectory analysis has been a central problem in applications of location tracking systems. Recently, the (discrete) Fr\'{e}chet distance becomes a popular approach for measuring the similarity of two trajectories because of its high feature extraction capability. Despite its importance, the Fr\'{e}chet distance has several limitations: (i) sensitive to noise as a trade-off for its high feature extraction capability; and (ii) it cannot be incorporated into machine learning frameworks due to its non-smooth functions. To address these problems, we propose the Fr\'{e}chet kernel (FRK), which is associated with a smoothed Fr\'{e}chet distance using a combination of two approximation techniques. FRK can adaptively acquire appropriate extraction capability from trajectories while retaining robustness to noise. Theoretically, we find that FRK has a positive definite property, hence FRK can be incorporated into the kernel method. We also provide an efficient algorithm to calculate FRK. Experimentally, FRK outperforms other methods, including other kernel methods and neural networks, in various noisy real-data classification tasks.